I don’t think that casting a range of bits as some other arbitrary type “is a bug nobody sees coming”.

C++ compilers also warn you that this is likely an issue and will fail to compile if configured to do so. But it will let you do it if you really want to.

That’s why I love C++

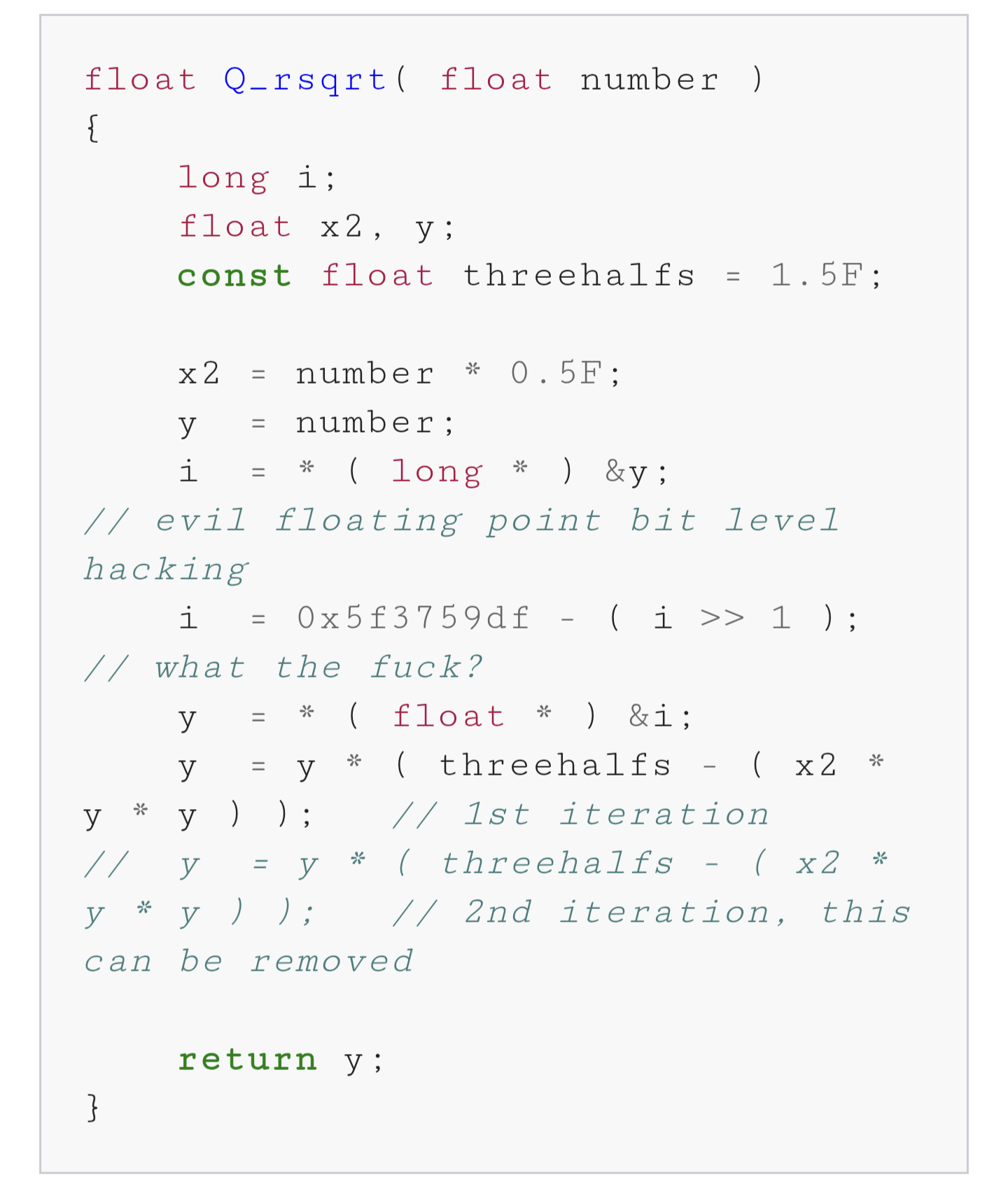

What do you mean I’m not supposed to take a float cast as a long, bit-shift it right by 1, and subtract it from 0x5f3759df?

`// what the fuck?`

They know. It’s a comment from the code.
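For anyone who hasn’t seen the trick being joked about, here is a rough sketch of it. The function name `fast_rsqrt` is made up for illustration, and this version uses `std::memcpy` for the bit reinterpretation instead of the pointer cast from the famous snippet, on the assumption that `float` is 32 bits wide.

```cpp
#include <cstdint>
#include <cstring>

// Approximates 1/sqrt(x) with the well-known bit trick.
// fast_rsqrt is a hypothetical name; std::memcpy is swapped in for the
// original pointer cast so the bits are copied without aliasing problems.
float fast_rsqrt(float x) {
    static_assert(sizeof(float) == sizeof(std::uint32_t), "assumes 32-bit float");
    std::uint32_t bits;
    std::memcpy(&bits, &x, sizeof bits);    // view the float's bits as an integer
    bits = 0x5f3759df - (bits >> 1);        // magic constant minus half the bit pattern
    float y;
    std::memcpy(&y, &bits, sizeof y);       // view the result as a float again
    return y * (1.5f - 0.5f * x * y * y);   // one Newton-Raphson refinement step
}
```

Calling `fast_rsqrt(4.0f)` lands close to 0.5, which is the whole point: a decent approximation without a square root or a division.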

C lets you shoot yourself in the foot.

C++ lets you reuse the bullet.

C is dangerous like your uncle who drinks and smokes. Y’wanna make a weedwhacker-powered skateboard? Bitchin’! Nail that fucker on there good, she’ll be right. Get a bunch of C folks together and they’ll avoid all the stupid easy ways to kill somebody, in service to building something properly dangerous. They’ll raise the stakes from “accident” to “disaster.” Whether or not it works, it’s gonna blow people away.

C++ is dangerous like a quiet librarian who knows exactly which forbidden tomes you’re looking for. He and his… associates… will gladly share all the dark magic you know how to ask about. They’ll assure you that the power cosmic would never, without sufficient warning, pull someone inside-out. They don’t question why a loving god would allow the powers you crave. They will show you which runes to carve, and then, they will hand you the knife.

Why use a strongly typed language at all, then?

Sounds unnecessarily restrictive, right? Just cast whatever as whatever and let future devs sort it out.

$myConstant = '15';

$myOtherConstant = getDateTime();

$buggyShit = $myConstant + $myOtherConstant;

Fuck everyone who comes after me for the next 20 years.

I actually do like that C/C++ let you do this stuff.

Sometimes it’s nice to acknowledge that I’m writing software for a computer and it’s all just bytes. Sometimes I don’t really want to wrestle with the ivory tower of abstract type theory mixed with vague compiler errors, I just want to allocate a block of memory and apply a minimal set of rules on top.

100%. In my opinion, the whole “build your program around your model of the world” mantra has caused more harm than good. Lots of “best practices” seem to be accepted without any quantitative measurement to prove they’re actually better. I want to think it’s just the growing pains of a young field.

Even with quantitative measurements they can do stupid things.

For work I have to write code in C# and Microsoft found that null reference exceptions were a common issue. They actually calculated how much these issues cost the industry (some big number) and put a lot of effort into changing the language so there’s a lot of warnings when something is null.

But the end result is people just set things to an empty value instead of leaving it as null to avoid the warnings. And sure great, you don’t have null reference exceptions because a value that defaulted to null didn’t get set. But now you have issues where a value is an empty string when it should have been set.

The exception message would tell you exactly where in the code there’s a mistake, so you’d immediately know there’s a problem, and it’s more likely to be discovered by unit tests or QA. An empty value where a real one was supposed to be set may not be noticed for a while and is difficult to track down.
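The thread is talking about C#, but the same trade-off can be sketched in C++ (C++17 or later, for `std::optional`). The `User` struct and both helper functions below are invented for illustration: one field is left unset and fails loudly when read, the other gets a “safe” empty default and sails through.

```cpp
#include <optional>
#include <string>

// Hypothetical record type, only here to contrast the two styles.
struct User {
    std::optional<std::string> email;  // stays unset until explicitly assigned
    std::string nickname;              // defaults to "", which hides a missing value
};

// Throws std::bad_optional_access if email was never set,
// so the mistake surfaces right where the value is used.
std::string email_or_throw(const User& u) {
    return u.email.value();
}

// Returns whatever is stored; a forgotten assignment yields "" with no complaint.
std::string nickname_unchecked(const User& u) {
    return u.nickname;
}
```

With a default-constructed `User`, `email_or_throw` throws immediately while `nickname_unchecked` quietly hands back an empty string for someone to find later.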

So their research indicated a costly issue (which is ultimately a dev making a mistake) and they fixed it by creating an even more costly issue.

There are always going to be things that are the developer’s responsibility to deal with, and there’s no fix for them at the language level. Trying to fix them with language changes can just make things worse.

People just think that applying arbitrary rules somehow makes software magically more secure, like with rust, as if the compiler won’t just “let you” do the exact same fucking thing if you type the `unsafe` keyword.

You don’t need `unsafe` to write vulnerable code in rust.

“C++ compilers also warn you…”

Ok, quick question here for people who work in C++ with other people (not personal projects). How many warnings does the code produce when it’s compiled?

I’ve written a little bit of C++ decades ago, and since then I’ve worked alongside devs who worked on C++ projects. I’ve never seen a codebase that didn’t produce hundreds if not thousands of lines of warnings when compiling.

I mostly see warnings when compiling source code of other projects. If you get a warning as a dev, it’s your responsibility to deal with it. But also your risk, if you don’t. I made it a habit to fix every warning in my own projects. For prototyping I might ignore them temporarily. Some types of warnings are unavoidable sometimes.

If you want to make yourself not ignore warnings, you can compile with `-Werror` if using GCC/G++ to make the compiler a pedantic asshole that doesn’t compile until you fix every fucking warning. Not advisable for drafting code, but definitely if you want to ship it.

Production code should never have any warnings left. This is a simple rule that will save a lot of headaches.

I put -Werror at the end of my makefile cflags so it actually treats warnings as errors now.
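As a small illustration of what that buys you, here is a sketch of a function that builds cleanly under `g++ -Wall -Wextra -Werror`, with the version that would have failed described in the comment. The file name `example.cpp` and the function `sum` are placeholders.

```cpp
#include <cstddef>
#include <vector>

// Build with: g++ -Wall -Wextra -Werror example.cpp
// (example.cpp is a placeholder file name)

int sum(const std::vector<int>& values) {
    int total = 0;
    // Writing `for (int i = 0; i < values.size(); ++i)` compares a signed int
    // with an unsigned size, which GCC reports under -Wsign-compare; with
    // -Werror that warning stops the build. Using std::size_t avoids it.
    for (std::size_t i = 0; i < values.size(); ++i) {
        total += values[i];
    }
    return total;
}
```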

0 in our case, but we are pretty strict. Same at the first place I worked too. Big tech companies.

You shouldn’t have any warnings. They can be totally benign, but when you get used to seeing warnings, you will not see the one that does matter.

Ideally? Zero. I’m sure some teams require “warnings as errors” as a compiler setting for all work to pass muster.

In reality, there’s going to be odd corner-cases where some non-type-safe stuff is needed, which will make your compiler unhappy. I’ve seen this a bunch in 3rd party library headers, sadly. So it ultimately doesn’t matter how good my code is.

There’s also a shedload of legacy things going on a lot of the time, like having to just let all warnings through because of the handful of places that will never be warning free. IMO it’s a way better practice to turn a warning off for a specific line. Sad thing is, it’s newer than C++ itself and is implementation dependent, so it probably doesn’t get used as much.
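A sketch of what that targeted suppression can look like on GCC and Clang, which both understand `#pragma GCC diagnostic`. MSVC uses its own `#pragma warning(push)` / `#pragma warning(disable: ...)` / `#pragma warning(pop)` form instead, which is the implementation-dependent part. The `on_event` callback is hypothetical, standing in for a signature dictated by some third-party API.

```cpp
// Hypothetical callback whose signature is fixed by someone else's API,
// so the unused parameter cannot simply be deleted.
#if defined(__GNUC__) || defined(__clang__)
#pragma GCC diagnostic push
#pragma GCC diagnostic ignored "-Wunused-parameter"
#endif
int on_event(int event_id, void* user_data) {
    return event_id * 2;  // user_data is intentionally ignored
}
#if defined(__GNUC__) || defined(__clang__)
#pragma GCC diagnostic pop  // every other warning stays active from here on
#endif
```

The push/pop pair keeps the suppression scoped to this one function, so the rest of the build can still run with warnings as errors.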

Depends on the age of the codebase, the age of the compiler and the culture of the team.

I’ve arrived into a team with 1000+ warnings, no const correctness (code had been ported from a C codebase) and nothing but C style casts. Within 6 months, we had it all cleaned up but my least favourite memory from that time was “I’ll just make this const correct; ah, right, and then this; and now I have to do this” etc etc. A right pain.
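For anyone wondering why const correctness cascades like that, here is a minimal sketch. All the names (`Counter`, `read_twice`, `truncate_ratio`) are invented for illustration.

```cpp
// Hypothetical class used only to show how const propagates.
class Counter {
public:
    void increment() { ++count_; }
    int value() const { return count_; }  // const: callable on const objects
private:
    int count_ = 0;
};

// Taking a const reference promises not to modify the argument. If value()
// were not marked const, this function would stop compiling, and fixing
// that is what sets off the "and then this, and now this" chain.
int read_twice(const Counter& c) {
    return c.value() + c.value();
}

// static_cast spells out the one conversion that is intended. A C-style cast
// `(int)ratio` would also compile here, but the same syntax elsewhere can
// silently strip const or reinterpret pointers.
int truncate_ratio(double ratio) {
    return static_cast<int>(ratio);
}
```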

So, did you get it down to 0 warnings and manage to keep it there? Or did it eventually start creeping up again?

Once we were at zero warnings, we enabled warnings as errors, despite the protestation of the grognards on the team.

There are no medals waiting for you for writing overly clever code. Trust me, I’ve tried. There’s no pride. Only pain.

It really depends on your field. I’m doing my master’s thesis in HPC, and there, clever programming is really worth it.

But it will let you do it if you really want to.

Now, I’ve seen this a couple of times in this post. The idea that the compiler will let you do anything is so bizarre to me. It’s not a matter of being allowed by the software to do anything. The software will do what you goddamn tell it to do, or it gets replaced.

WE’RE the humans, we’re not asking some silicon diodes for permission. What the actual fuck?!? We created the fucking thing to do our bidding, and now we’re all oh pwueez mr computer sir, may I have another ADC EAX, R13? FUCK THAT! Either the computer performs like the tool it is, or it goes the way of broken hammers and lawnmowers!

Yeah, but there’s some things computers are genuinely better at than humans, which is why we code in the first place. I totally agree that you shouldn’t ever be completely controlled by your machine, but strong nudging saves a lot of trouble.

I used to love C++ until I learned Rust. Now I think it is obnoxious, because even if you write modern C++, without raw pointers, casting and the like, you will be constantly questioning whether you’re doing stuff right. The spec is just way too complicated at this point and it can only get worse, unless they choose to break backwards compatibility and throw out the pre-C++11 bullshit.

Depending on what I’m doing, sometimes rust will annoy me just as much. Often I’m doing something I know is definitely right, but I have to go through so much ceremony to get it to work in rust. The most commonly annoying example I can think of is trying to mutably borrow two distinct fields of a struct at the same time. You can’t do it. It’s the worst.